I came to insight analysis with a mixed background. I had been a fundraiser, and because I’d worked in small organisations I’d tried my hand at many types of fundraising. The other side of my experience came from science: I studied psychology and neuroscience and worked on research in those fields. From that I learned the scientific method, statistics and how to work with data.

This is the general-purpose methodology that I drew up as I was finding my feet in my non-profit Fundraising Analysis role, a role that was also new to my organisation. I knew fundraising and fundraisers, and I knew data and analysis, but this methodology brought those things together in a way that allowed me to explain the work to others.

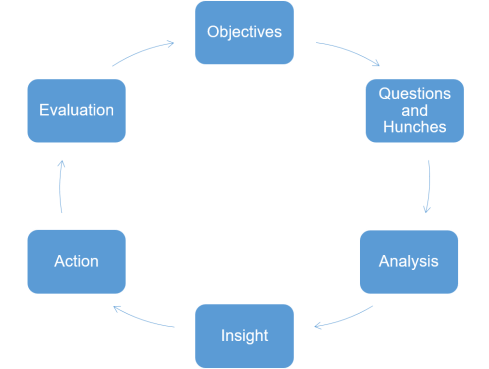

Insight Analysis cycle

What to bear in mind during the process

Objectives:

What do we want to achieve? (overall Goals – increase income/improve donor care)

What do we want to do? (plan for Activities – design a new product, improve a process)

Questions and Hunches:

Questions are often the place people start. There’s nothing wrong with that for brainstorming a starting point, but the sooner the questions link back to your objectives, the sooner you focus in on what you really need.

Bearing our objectives in mind, what do we already know?

What do we want to know – what will help towards our objectives?

Less is more! The fewer questions you take into the analysis, the more focused it will be and the easier it is to act on or iterate from.

Hunches (or hypotheses) about what you think the answers to the questions will be are really important. The move towards ‘insight-driven decision making’ is often described as a move away from gut feel and towards data. I find that using client intuition and experience to form hypotheses, and to sense-check findings as you go along, keeps you on track to useful findings. Hunches are where you start to combine gut feel with the data.

Analysis:

This is where you crunch the data. I unpack this in part 2.

Insight:

We have our answers, and a story that we can understand. What do we do now?

Action:

The information from the insight goes into planning actions.

Evaluation:

What does success look like?

How are we going to measure that?

Do we need any different/additional data capture?

Evaluation is analysis too: you check what happened, then iterate back through insight, action and evaluation while you tweak or optimise whatever you were working towards.
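To make the evaluation questions a little more concrete, here is a minimal sketch in Python of one way to write down "what success looks like" before the activity runs, and then check the result against it. Everything in it is an assumption made for illustration: the `CampaignResult` fields, the choice of response rate as the success measure, the `target_uplift` threshold and the numbers themselves are hypothetical, and your own objectives may call for a different measure entirely.

```python
from dataclasses import dataclass


@dataclass
class CampaignResult:
    """Hypothetical summary of one fundraising activity."""
    name: str
    donors_contacted: int
    gifts_received: int
    income: float


def response_rate(result: CampaignResult) -> float:
    """Share of contacted donors who gave (0.0 if nobody was contacted)."""
    if result.donors_contacted == 0:
        return 0.0
    return result.gifts_received / result.donors_contacted


def evaluate(baseline: CampaignResult, new: CampaignResult,
             target_uplift: float) -> dict:
    """Compare the new activity with a baseline against an agreed target.

    target_uplift is the improvement in response rate that we decided,
    up front, would count as success (an assumption for this sketch).
    """
    base_rate = response_rate(baseline)
    new_rate = response_rate(new)
    uplift = new_rate - base_rate
    return {
        "baseline_rate": base_rate,
        "new_rate": new_rate,
        "uplift": uplift,
        "met_target": uplift >= target_uplift,
    }


# Illustrative numbers only, not real results.
baseline = CampaignResult("last year's appeal", 1000, 50, 2500.0)
new = CampaignResult("redesigned appeal", 1000, 80, 4400.0)

summary = evaluate(baseline, new, target_uplift=0.02)
print(summary)
```

The point of the sketch is the order of events rather than the arithmetic: the measure and the target are agreed before the action happens, which also tells you early whether you need any different or additional data capture to calculate them.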

And when you’re done – on to New Objectives!

In part 2 we unpack what happens under the hood of analysis.